Semiconductor design and manufacturing companies are behemoths, with large departments whose many functions feed the multiple stages of the semiconductor value chain. Managing and accessing the sheer volume of data and information within a single organization takes a great many person-hours, and even that effort fails to make the information readily accessible.

This is a problem with a no-brainer solution: an AI Agent. And that’s exactly how several semiconductor design and manufacturing companies, each with its own AI team, are tackling the problem. An Agent retrieves relevant information from its data sources and summarizes an answer for the user, citing the sources it used.

Many Agents for Many Problems

Semiconductor companies have a very long and complex value chain, and every stage can involve several tools, resources and data sets that are specific to the engineers within that function alone. So companies look at building Agents for specific functions or tools, each one helping the engineers or users in a narrowly targeted part of the value chain.

The applications for AI Agents are highly varied, and configuring a separate Agent for each use case is also important for data security and accuracy. A behemoth semiconductor design and manufacturing company would therefore need many, many Agents… so what’s the best course of action?

A Framework to Develop and Deploy Agents

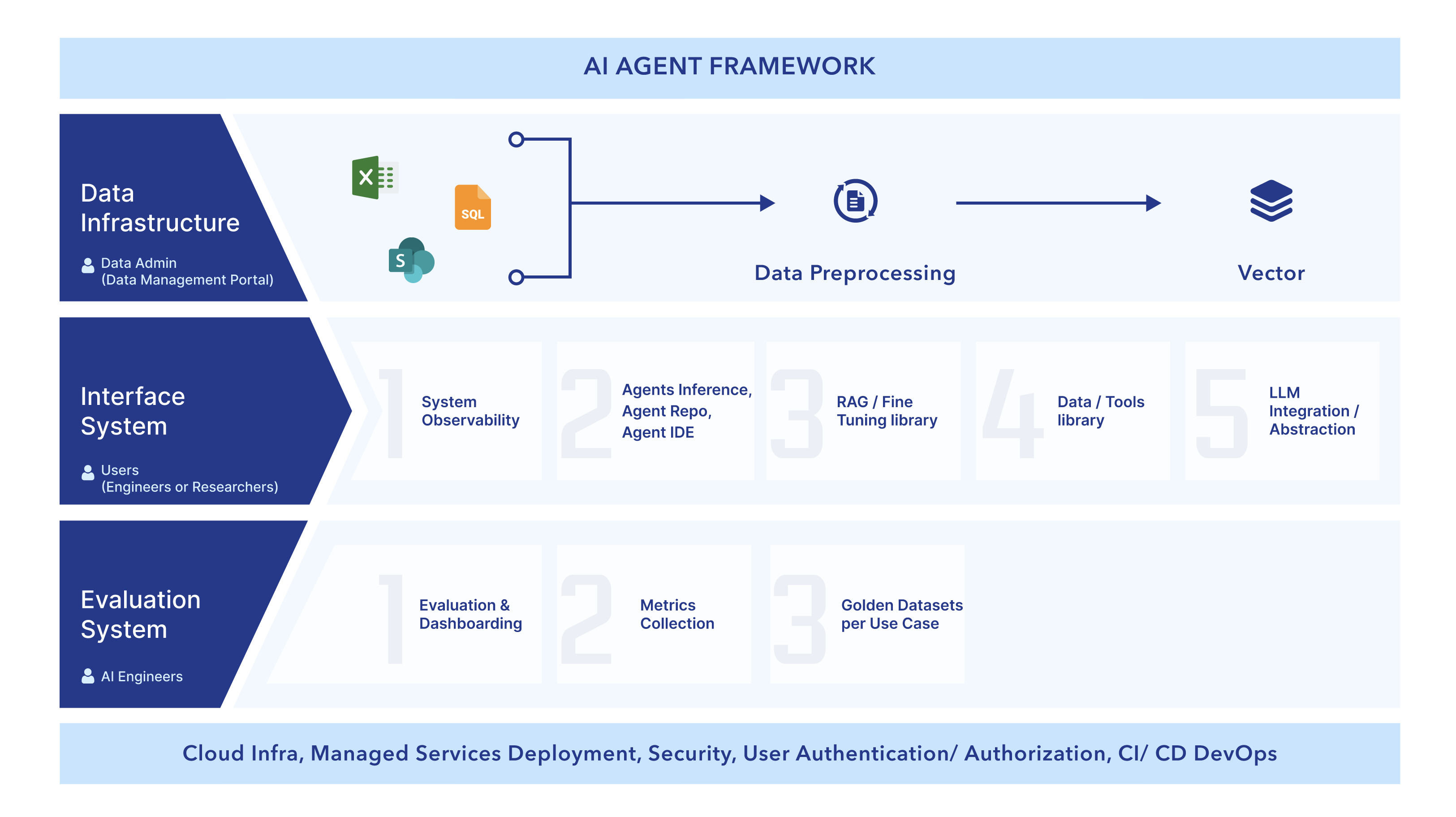

A few key pieces make a framework-driven approach the most effective, comprehensive and secure way to develop and deploy Agents repeatedly.

The first element is the data infrastructure. It is needed because even leading semiconductor companies have data ‘all over the place’: uncategorized, and in both structured and unstructured forms, from PDFs to databases and everything in between. The data infrastructure preprocesses this data to make it usable while preserving the data security and access controls that already exist. It follows a strategic framework of data governance policies that establish standards and processes for keeping data accurate, consistent and complete, which reduces the risk of biases and errors in AI outcomes. Clear data governance policies also improve transparency and streamline data management, leading to more efficient AI development and deployment.
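As a rough illustration of preprocessing that preserves existing access controls, the sketch below splits a document into chunks and attaches the source's permitted groups to every chunk, so retrieval can filter by user. The names `Chunk`, `chunk_document`, `visible_chunks` and the group-based permission model are all assumptions made for this example, not part of any specific product.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    """A piece of a source document plus the access rules it inherits."""
    source: str
    text: str
    allowed_groups: FrozenSet[str]


def chunk_document(source: str, text: str, allowed_groups: Iterable[str],
                   max_words: int = 50) -> List[Chunk]:
    """Split text into fixed-size word windows, copying the source's
    access groups onto every chunk (hypothetical permission model)."""
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    groups = frozenset(allowed_groups)
    return [
        Chunk(source, " ".join(words[i:i + max_words]), groups)
        for i in range(0, len(words), max_words)
    ]


def visible_chunks(chunks: Iterable[Chunk],
                   user_groups: Iterable[str]) -> List[Chunk]:
    """Keep only the chunks that share at least one group with the user."""
    user = frozenset(user_groups)
    return [c for c in chunks if c.allowed_groups & user]
```

Because the permissions travel with each chunk, an Agent built on this data can only ever retrieve what the requesting user was already allowed to see in the source system.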

The next element is the inference system. It is made up of several pieces that work in tandem or independently, each powering different requirements depending on the use case of the Agent.

- An LLM abstraction: Not tied to a single LLM, this piece can bring in various LLMs to leverage their different strengths, an important requirement given how fast-paced model developments and updates are.

- A library of tools: Tools that an Agent can use for various tasks or actions, such as a data visualization tool.

- RAG/fine-tuning library: This gives the Agent a host of techniques that substantially improve its performance.

- An Agent inference system or agent repo: A repository of source code and configurations that run the Agents.

- System Observability: This part of the system allows real-time observation of various factors, which helps monitor and debug the system and ensure the models and infrastructure are working correctly.
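To make the first two pieces more concrete, here is a minimal sketch of how an LLM abstraction and a tool library might sit behind one Agent. Every name in it (`LLM`, `EchoLLM`, `Agent`, `run_tool`) is hypothetical, and `EchoLLM` is a stand-in for a real model client, which would call a vendor API instead.

```python
from typing import Any, Callable, Dict, Protocol


class LLM(Protocol):
    """Anything with a complete() method can serve as the Agent's model."""
    def complete(self, prompt: str) -> str: ...


class EchoLLM:
    """Placeholder backend that echoes the prompt (illustration only)."""
    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class Agent:
    """Pairs one interchangeable LLM backend with a named set of tools."""

    def __init__(self, llm: LLM, tools: Dict[str, Callable[..., Any]]):
        self.llm = llm
        self.tools = dict(tools)

    def run_tool(self, name: str, *args: Any, **kwargs: Any) -> Any:
        """Look a tool up by name and call it; unknown names raise KeyError."""
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        return self.tools[name](*args, **kwargs)

    def answer(self, question: str) -> str:
        """Delegate to whichever LLM backend was configured."""
        return self.llm.complete(question)
```

Swapping models then means passing a different object that implements `complete`, and giving an Agent a new capability means registering one more entry in `tools`, without touching the Agent code itself.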

The last, crucial element of the infrastructure is the evaluation system, which makes sure the Agents perform as expected, based on a golden dataset and evaluation metrics defined for each use case. The evaluation system can report how metrics and performance respond to any change made in the inference system, across the lifecycle of the entire infrastructure.
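A simple way to picture this is a scoring function run against the golden dataset before and after each change. The sketch below assumes exact-match accuracy as the metric and a golden dataset of (question, expected answer) pairs; real use cases would likely define richer metrics, and the function names here are hypothetical.

```python
from typing import Callable, List, Tuple


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not count."""
    return " ".join(text.lower().split())


def exact_match_accuracy(agent_fn: Callable[[str], str],
                         golden: List[Tuple[str, str]]) -> float:
    """Fraction of golden questions the agent answers exactly (after normalizing)."""
    if not golden:
        raise ValueError("golden dataset must not be empty")
    hits = sum(
        normalize(agent_fn(question)) == normalize(expected)
        for question, expected in golden
    )
    return hits / len(golden)


def passes_regression_gate(baseline: float, candidate: float,
                           tolerance: float = 0.0) -> bool:
    """Accept a change only if its score has not dropped more than `tolerance`."""
    return candidate >= baseline - tolerance
```

Running `exact_match_accuracy` on the current Agent and on a modified one, then comparing the two scores with `passes_regression_gate`, gives a basic check that a new model, tool or retrieval technique has not made a given use case worse.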

Across the framework and its Agents, there are different sets of users, and each gets a web interface experience designed for its user persona. Lastly, the framework runs on cloud infrastructure with managed services, security, user authentication and authorization, and CI/CD DevOps.

This framework allows an organization to configure and launch an Agent within weeks for any use case.